To experiment how to collect student information when student rooster is not predefined, reading Crowdmark cover page with locally installed Llama 3.2 Vision 11B was attempted to evaluate the accuracy of OCR function from the model.

The image is one of typical Crowdmark cover page with QR code and the area for the student first and last name, and student id, which is worded as participant ID below. Most of information is blurred since it is a real cover page for that contest. So, you may not be able to see how bad or good student’s handwriting was.

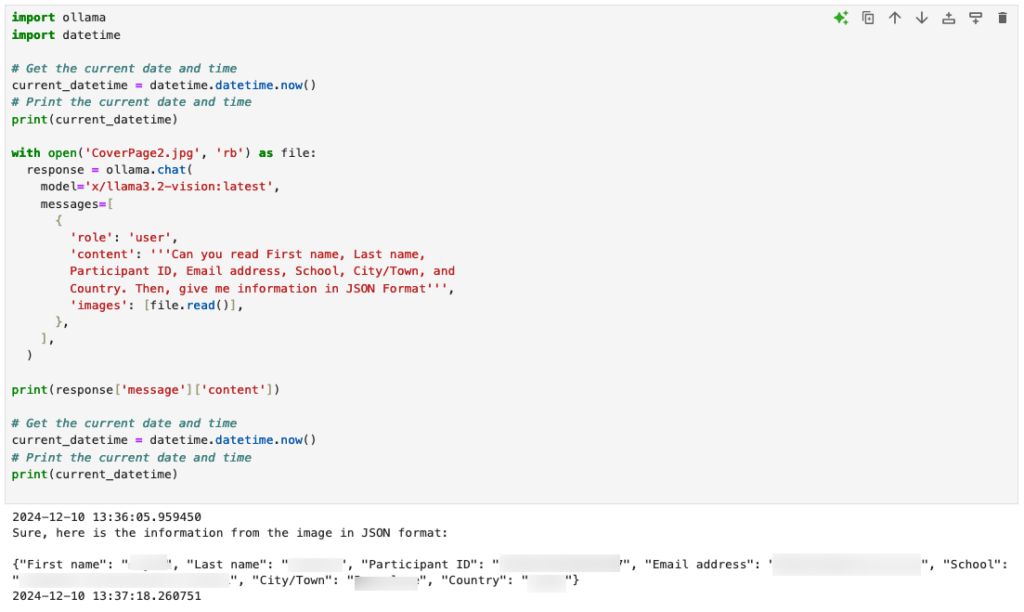

The prompt was requesting specific information from the cover page and return them in JSON format as below. Those provided information were accurate. Since this model was running in my local machine with Ollama, it took around 13 seconds to get response. The results are returned as below.

Python source codes used can be found below.

import ollama

import datetime

# Get the current date and time

current_datetime = datetime.datetime.now()

# Print the current date and time

print(current_datetime)

with open('CoverPage2.jpg', 'rb') as file:

response = ollama.chat(

model='x/llama3.2-vision:latest',

messages=[

{

'role': 'user',

'content': '''Can you read First name, Last name,

Participant ID, Email address, School, City/Town, and

Country. Then, give me information in JSON Format''',

'images': [file.read()],

},

],

)

print(response['message']['content'])

# Get the current date and time

current_datetime = datetime.datetime.now()

# Print the current date and time

print(current_datetime)